With the new enhancements to the Azure OpenAI Service Provisioned offering, we’re taking a big step forward in making AI accessible and enterprise-ready.

In today’s fast-evolving digital landscape, enterprises need more than just powerful AI models; they need AI solutions that are adaptable, reliable, and scalable. With the upcoming availability of Data Zones and new enhancements to the Provisioned offering in Azure OpenAI Service, we’re taking a big step forward in making AI broadly available and enterprise-ready. These features represent a fundamental shift in how organizations can deploy, manage, and optimize generative AI models.

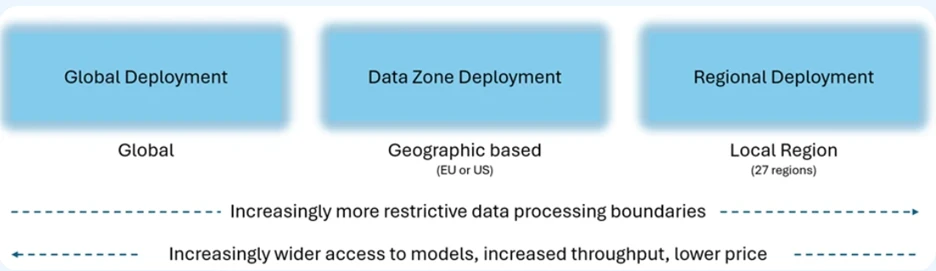

With the launch of Azure OpenAI Service Data Zones in the European Union and the United States, enterprises can now scale their AI workloads with even greater ease while maintaining compliance with regional data residency requirements. Historically, variances in model-region availability forced customers to manage multiple resources, often slowing down development and complicating operations. Azure OpenAI Service Data Zones can remove that friction by offering flexible, multi-regional data processing while ensuring data is processed and stored within the selected data boundary.

This is a compliance win that also allows businesses to seamlessly scale their AI operations across regions, optimizing for both performance and reliability without having to navigate the complexities of managing traffic across disparate systems.

Leya, a tech startup building a genAI platform for legal professionals, has been exploring the Data Zones deployment option.

“The Azure OpenAI Service Data Zones deployment option offers Leya a cost-efficient way to securely scale AI applications to thousands of lawyers, ensuring compliance and top performance. It helps us achieve better customer quality and control, with rapid access to the latest Azure OpenAI innovations.” - Sigge Labor, CTO, Leya

Data Zones will be available for both Standard (PayGo) and Provisioned offerings, starting this week on November 1, 2024.

Industry-leading performance

Enterprises depend on predictability, especially when deploying mission-critical applications. That’s why we’re introducing a 99% latency service level agreement (SLA) for token generation. This latency SLA ensures that tokens are generated at faster and more consistent speeds, especially at high volumes.

The Provisioned offering provides predictable performance for your application. Whether you’re in e-commerce, healthcare, or financial services, the ability to depend on low-latency and high-reliability AI infrastructure translates directly to better customer experiences and more efficient operations.

Lowering the cost of getting started

To make it easier to test, scale, and manage, we’re reducing hourly pricing for Provisioned Global and Provisioned Data Zone deployments starting November 1, 2024. This reduction in cost ensures that our customers can benefit from these new features without the burden of high expenses. The Provisioned offering continues to offer discounts for monthly and annual commitments.

| Deployment option | Hourly PTU | One-month reservation per PTU | One-year reservation per PTU |
| --- | --- | --- | --- |
| Provisioned Global | Current: $2.00 per hour; November 1, 2024: $1.00 per hour | $260 per month | $221 per month |
| Provisioned Data Zone (New) | November 1, 2024: $1.10 per hour | $260 per month | $221 per month |
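
To make the trade-off between hourly and reserved pricing concrete, the sketch below works through the arithmetic using the per-PTU prices from the table above. It is an illustration only: the 730-hour month, the function names, and the 15-PTU example size are assumptions for this sketch, not part of the announcement.

```python
# Assumption: an average month has 730 hours (8,760 hours / 12).
HOURS_PER_MONTH = 730

# Per-PTU prices from the table above (effective November 1, 2024).
HOURLY_RATE = {"Provisioned Global": 1.00, "Provisioned Data Zone": 1.10}
MONTHLY_RESERVATION = 260.0           # one-month reservation, per PTU per month
YEARLY_RESERVATION_PER_MONTH = 221.0  # one-year reservation, per PTU per month


def hourly_cost(option: str, ptus: int, hours: float) -> float:
    """Cost of running `ptus` PTUs for `hours` hours on hourly billing."""
    return HOURLY_RATE[option] * ptus * hours


def break_even_hours(option: str, reservation_per_month: float) -> float:
    """Hours per month above which a reservation is cheaper than hourly billing."""
    return reservation_per_month / HOURLY_RATE[option]


if __name__ == "__main__":
    ptus = 15  # hypothetical deployment size
    for option in HOURLY_RATE:
        always_on = hourly_cost(option, ptus, HOURS_PER_MONTH)
        reserved = MONTHLY_RESERVATION * ptus
        print(f"{option}: hourly, always on = ${always_on:,.2f}/month; "
              f"one-month reservation = ${reserved:,.2f}/month; "
              f"break-even at {break_even_hours(option, MONTHLY_RESERVATION):.0f} hours/month")
```

Under these assumptions, hourly billing suits short tests and bursty workloads, while a deployment that runs for more than roughly a third of the month is cheaper on a reservation.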

We’re also reducing deployment minimum entry points for Provisioned Global deployment by 70% and scaling increments by up to 90%, lowering the barrier for businesses to get started with the Provisioned offering earlier in their development lifecycle.

Deployment quantity minimums and increments for the Provisioned offering

| Model | Global | Data Zone (New) | Regional |
| --- | --- | --- | --- |
| GPT-4o | Min: Increment: | Min: 15, Increment: 5 | Min: 50, Increment: 50 |
| GPT-4o-mini | Min: Increment: | Min: 15, Increment: 5 | Min: 25, Increment: 25 |
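
As a rough illustration of how minimums and increments shape deployment sizing, the sketch below rounds a required PTU count up to the next allowed size. It assumes that valid sizes are the minimum plus a whole number of increments; that rule and the function name `valid_ptu_size` are assumptions for this example, so check the service documentation for the actual sizing rules.

```python
def valid_ptu_size(required: int, minimum: int, increment: int) -> int:
    """Round `required` PTUs up to the nearest size of the form
    minimum + k * increment (k >= 0). Assumed sizing rule, see lead-in."""
    if minimum <= 0 or increment <= 0:
        raise ValueError("minimum and increment must be positive")
    if required <= minimum:
        return minimum
    # Ceiling division using integers only, to avoid float rounding.
    steps = -(-(required - minimum) // increment)
    return minimum + steps * increment


if __name__ == "__main__":
    needed = 22  # hypothetical capacity estimate, in PTUs
    # GPT-4o values from the table above.
    print("Data Zone:", valid_ptu_size(needed, minimum=15, increment=5))
    print("Regional: ", valid_ptu_size(needed, minimum=50, increment=50))
```

With a smaller minimum and increment, the deployed size tracks the estimated need more closely, which is the practical effect of the reductions described above.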

For developers and IT teams, this means faster time-to-deployment and less friction when transitioning from the Standard to the Provisioned offering. As businesses grow, these simple transitions become vital to maintaining agility while scaling AI applications globally.

Efficiency through caching: A game-changer for high-volume applications

Another new feature is Prompt Caching, which offers cheaper and faster inference for repetitive API requests. Cached tokens are 50% off for Standard. For applications that frequently send the same system prompts and instructions, this improvement provides a significant cost and performance advantage.

By caching prompts, organizations can maximize their throughput without needing to reprocess identical requests repeatedly, all while reducing costs. This is particularly beneficial for high-traffic environments, where even slight performance boosts can translate into tangible business gains.
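
The effect of the 50% discount on cached tokens can be estimated with a few lines of arithmetic. The sketch below is a back-of-the-envelope calculation for the Standard offering; the price per million input tokens and the token counts are hypothetical placeholders, and only the 50% discount comes from the text above.

```python
CACHED_DISCOUNT = 0.5  # from the text: cached tokens are 50% off for Standard


def input_cost(total_input_tokens: int, cached_tokens: int,
               price_per_million: float) -> float:
    """Estimated input-token cost when `cached_tokens` of the input
    are served from the prompt cache at the discounted rate."""
    if not 0 <= cached_tokens <= total_input_tokens:
        raise ValueError("cached_tokens must be between 0 and total_input_tokens")
    uncached = total_input_tokens - cached_tokens
    billable = uncached + cached_tokens * (1 - CACHED_DISCOUNT)
    return billable * price_per_million / 1_000_000


if __name__ == "__main__":
    price = 2.00  # hypothetical price per 1M input tokens, not a published rate
    without_cache = input_cost(1_000_000, 0, price)
    with_cache = input_cost(1_000_000, 800_000, price)
    print(f"no cache hits: ${without_cache:.2f}, 80% cached: ${with_cache:.2f}")
```

The larger the share of each request that repeats, such as a long shared system prompt, the closer the input cost gets to half of the uncached price.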

A new era of model flexibility and performance

One of the key advantages of the Provisioned offering is that it is flexible, with one simple hourly, monthly, and yearly price that applies to all available models. We’ve also heard your feedback that it is difficult to understand how many tokens per minute (TPM) you get for each model on Provisioned deployments. We now provide a simplified view of the number of input and output tokens per minute for each Provisioned deployment. Customers no longer need to rely on detailed conversion tables or calculators.

We’re maintaining the flexibility that customers love with the Provisioned offering. With monthly and annual commitments you can still change the model and version (for example, between GPT-4o and GPT-4o-mini) within the reservation period without losing any discount. This agility allows businesses to experiment, iterate, and evolve their AI deployments without incurring unnecessary costs or having to restructure their infrastructure.

Enterprise readiness in action

Azure OpenAI’s continuous innovations aren’t just theoretical; they’re already delivering results in various industries. For instance, companies like AT&T, H&R Block, Mercedes, and more are using Azure OpenAI Service not just as a tool, but as a transformational asset that reshapes how they operate and engage with customers.

Beyond models: The enterprise-grade promise

It’s clear that the future of AI is about much more than just offering the latest models. While powerful models like GPT-4o and GPT-4o-mini provide the foundation, it’s the supporting infrastructure (such as the Provisioned offering, the Data Zones deployment option, SLAs, caching, and simplified deployment flows) that truly makes Azure OpenAI Service enterprise-ready.

Microsoft’s vision is to provide not only cutting-edge AI models but also the enterprise-grade tools and support that allow businesses to scale these models confidently, securely, and cost-effectively. From enabling low-latency, high-reliability deployments to offering flexible and simplified infrastructure, Azure OpenAI Service empowers enterprises to fully embrace the future of AI-driven innovation.

Get started today

As the AI landscape continues to evolve, the need for scalable, flexible, and reliable AI solutions becomes even more critical for enterprise success. With the latest enhancements to Azure OpenAI Service, Microsoft is delivering on that promise, giving customers not just access to world-class AI models, but the tools and infrastructure to operationalize them at scale.

Now is the time for businesses to unlock the full potential of generative AI with Azure, moving beyond experimentation into real-world, enterprise-grade applications that drive measurable outcomes. Whether you’re scaling a virtual assistant, developing real-time voice applications, or transforming customer service with AI, Azure OpenAI Service provides the enterprise-ready platform you need to innovate and grow.